In the last few weeks I’ve found myself using Azure Data Studio more, specially the notebooks to organize my scripts in a more explanatory and aesthetically way. About a year ago I wrote a SQL script that I use to backup and restore databases from IaaS to PaaS (Azure VM to Azure Managed Instance) using Azure storage accounts. Then it hit me, why keep dealing with generated SQL wrapped in an XML field if I can have the same scripts generated in a Azure Data Studio notebook. This post covers the process of how I got around the generation of the JSON document needed for the ADS Notebook.

Understanding the Structure

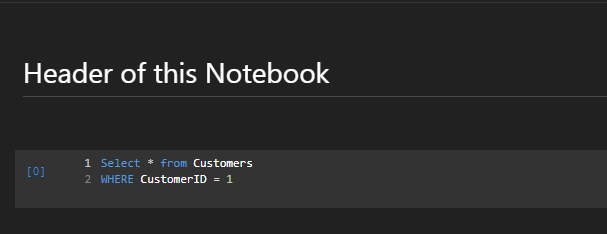

The structure of a IPYNB file is basically a JSON document. It can use HTML or Markup language for Text cells and JSON Arrays to construct a SQL Query. It contains some basic metadata and notebook specific formatting fields followed by an array of cell nodes.

{

"metadata": {

"kernelspec": {

"name": "SQL",

"display_name": "SQL",

"language": "sql"

},

"language_info": {

"name": "sql",

"version": ""

}

},

"nbformat_minor": 2,

"nbformat": 4,

"cells": [

{

"cell_type": "markdown",

"source": [

"# Header of this Notebook"

],

"metadata": {

"azdata_cell_guid": "b4e6057c-bc62-4205-8c61-378b83f6123a"

}

},

{

"cell_type": "code",

"source": [

"Select * from Customers \r\n",

"WHERE CustomerID = 1"

],

"metadata": {

"azdata_cell_guid": "4315dc7a-f4ab-4531-ab23-8c94c8aa0656"

},

"outputs": [],

"execution_count": 0

}

]

}

The SQL Query

As I mentioned before, I took an existing query developed by me about a year ago and converted to a query file to be consumed by a PowerShell script. This query has several options as query parameters.

- Storage Account – Just the name of the storage account.

- Container – Name of the container inside the storage account.

- Replace – BIT field to specify is the restore will replace an existing database.

- Move Location – Specifies the path to where the database files will be placed.

- Backup Files Count – In how many files you want to split your backup?

This script has the mentioned parameters enclosed in << >> to be tokenized in PowerShell during execution.

DECLARE @StorageAccount VARCHAR (MAX) = '<<StorageAccount>>'

, @Container VARCHAR (100) = '<<Container>>'

, @Replace BIT = '<<REPLACE>>'

, @BackupQueryOptions NVARCHAR (MAX) = N' WITH COMPRESSION, STATS = 5 '

, @RestoreQueryOptions NVARCHAR (MAX) = N' WITH STATS = 5, NOUNLOAD '

, @MoveFilesLocation NVARCHAR (MAX) = N'<<MoveLocation>>'

, @BackupFilesCount INT = '<<BackupCount>>'

, @Credential NVARCHAR (300);

SET @Credential = CONCAT('https://', @StorageAccount, '.blob.core.windows.net/', @Container);

DROP TABLE IF EXISTS #Output

, #MoveStatements;

DECLARE @CredentialVerification NVARCHAR (MAX);

IF EXISTS (

SELECT 1

FROM sys.credentials

WHERE name = @Credential

)

BEGIN

SET @CredentialVerification = N'{

"cell_type": "markdown",

"source": ["# Credential: ' + @Credential + N' Exists\r\n",

"- Credential exists but secret key has not been validated. You can visit your Storage Account on Azure to retrieve the secret key. "],

"metadata": {

"azdata_cell_guid": ""' + LOWER(NEWID()) + N'",

"tags": []

},';

END;

ELSE

BEGIN

SET @CredentialVerification

= N'{

"cell_type": "markdown",

"source": ["# Credential: ' + @Credential

+ N' cannot be found. \r\n",

"- The specified credential cannot be found. \n",

"- Use the script below to generate the specified credential\n",

"- Visit you azure portal to retrieve the secret key for the storage accouunt specified"],

"metadata": {

"azdata_cell_guid": "' + LOWER(NEWID()) + N'",

"tags": []

}

},{

"cell_type": "code",

"source": [

"CREATE CREDENTIAL [' + @Credential + N']\n",

"WITH IDENTITY = ''SHARED ACCESS SIGNATURE'',SECRET =''Your SECRET KEY goes here''\n"],

"metadata": {

"azdata_cell_guid": "' + LOWER(NEWID()) + N'",

"tags": []

},

"outputs": [],

"execution_count": 1

},';

END;

SET NOCOUNT ON;

CREATE TABLE #Output

(

DatabaseName VARCHAR (100)

, BackupScript VARCHAR (MAX)

, RestoreScript VARCHAR (MAX)

);

SELECT @RestoreQueryOptions = IIF(@Replace = 1, CONCAT(@RestoreQueryOptions, ',REPLACE '), @RestoreQueryOptions);

DECLARE @BackupQueryStart NVARCHAR (MAX) = N''

, @BackupFiles NVARCHAR (MAX) = N''

, @RestoreQueryStart NVARCHAR (MAX) = N''

, @Date DATE = CAST(GETDATE() AS DATE)

, @Database VARCHAR (100)

, @Url VARCHAR (1000) = '' -- Always leave blank

, @MoveStatement VARCHAR (MAX);

CREATE TABLE #MoveStatements

(

DB sysname

, MoveStatement VARCHAR (MAX)

);

EXEC sp_MSforeachdb '

INSERT INTO #MoveStatements

(

DB

, MoveStatement

)

SELECT ''?'' as DB,(

SELECT '', '' + ''MOVE N''''''+name+'''''' TO N''''''+RIGHT(physical_name,CHARINDEX(''\'',Reverse(Replace(physical_name,''/'',''\'')))-1)+'''''''' FROM ?.sys.database_files df

FOR XML PATH('''')) as MoveStatement ';

UPDATE #MoveStatements

SET MoveStatement = REPLACE(MoveStatement, 'TO N''', 'TO N''' + @MoveFilesLocation + '\');

DECLARE BackupAndRestoreCursor CURSOR FORWARD_ONLY FOR(

SELECT [name]

FROM sys.databases

WHERE database_id > 4);

OPEN BackupAndRestoreCursor;

FETCH NEXT FROM BackupAndRestoreCursor

INTO @Database;

WHILE @@FETCH_STATUS = 0

BEGIN

SET @MoveStatement = '';

SET @MoveStatement = (

SELECT MoveStatement

FROM #MoveStatements AS MS

WHERE MS.DB = @Database

);

SET @BackupQueryStart = N'"BACKUP DATABASE ' + @Database + N' TO\n", ';

SET @RestoreQueryStart = N'"RESTORE DATABASE ' + @Database + N' FROM\n", ';

IF @BackupFilesCount = 1

BEGIN

SET @Url = 'https://' + @StorageAccount + '.blob.core.windows.net/' + @Container + '/' + @Database + '_backup_' + CAST(@Date AS VARCHAR (20)) + '.bak';

SET @BackupFiles = N'"URL = ''' + @Url + N'''\n",';

END;

IF @BackupFilesCount > 1

BEGIN

DECLARE @QueryLoopCount INT = 1

, @LineEnding NVARCHAR (4) = N'';

WHILE @QueryLoopCount <= @BackupFilesCount

BEGIN

IF @QueryLoopCount < @BackupFilesCount

SET @LineEnding = N',';

ELSE

SET @LineEnding = N'';

SET @Url = 'https://' + @StorageAccount + '.blob.core.windows.net/' + @Container + '/' + @Database + '_backup_' + CAST(@Date AS VARCHAR (20)) + '_' + CAST(@QueryLoopCount AS NVARCHAR (4)) + '.bak';

SET @BackupFiles = @BackupFiles + N'

"\t\tURL = ''' + @Url + N'''' + @LineEnding + N'\n",';

SET @QueryLoopCount = @QueryLoopCount + 1;

END;

END;

INSERT INTO #Output

(

DatabaseName

, BackupScript

, RestoreScript

)

VALUES

(

@Database

, @BackupQueryStart + @BackupFiles + '

' + CONCAT('"\t\t', @BackupQueryOptions) + '\n",

' + CONCAT('"\t\t, CREDENTIAL =''', @Credential, '''\n"')

, @RestoreQueryStart + @BackupFiles + N'

' + CONCAT('"\t\t', @RestoreQueryOptions) + N'\n",

' + CONCAT('"\t\t', @MoveStatement) + '\n",

' + CONCAT('"\t\t, CREDENTIAL =''', @Credential, '''\n"')

);

SET @BackupQueryStart = N'';

SET @BackupFiles = N'';

FETCH NEXT FROM BackupAndRestoreCursor

INTO @Database;

END;

CLOSE BackupAndRestoreCursor;

DEALLOCATE BackupAndRestoreCursor;

IF OBJECT_ID('tempdb..#MasterScript') IS NOT NULL

DROP TABLE #MasterScript;

CREATE TABLE #MasterScript

(

BackupScript VARCHAR (MAX)

, RestoreScript VARCHAR (MAX)

);

DECLARE @DatabaseHeader NVARCHAR (MAX)

, @DatabaseName VARCHAR (100)

, @RestoreColumn NVARCHAR (MAX)

, @BackupColumn NVARCHAR (MAX)

, @BackupScript NVARCHAR (MAX) = N''

, @RestoreScript NVARCHAR (MAX) = N'';

SET @BackupScript = N'{"cell_type": "markdown",

"source": [

"# DATABASE BACKUPS"

],

"metadata": {

"azdata_cell_guid": "' + LOWER(NEWID()) + N'",

"tags": []

}

},';

SET @RestoreScript = N'{"cell_type": "markdown",

"source": [

"# DATABASE RESTORES"

],

"metadata": {

"azdata_cell_guid": "' + LOWER(NEWID()) + N'",

"tags": []

}

},';

DECLARE @EndCounter TINYINT = 0

, @DBCount TINYINT = (

SELECT COUNT(DatabaseName)

FROM #Output AS O

)

, @ArrayEnd NVARCHAR (3);

DECLARE MasterScript CURSOR FOR(SELECT * FROM #Output);

OPEN MasterScript;

FETCH NEXT FROM MasterScript

INTO @DatabaseName

, @BackupColumn

, @RestoreColumn;

WHILE @@FETCH_STATUS = 0

BEGIN

SET @EndCounter = @EndCounter + 1;

IF @EndCounter < @DBCount

BEGIN

SET @ArrayEnd = N',';

END;

ELSE

BEGIN

SET @ArrayEnd = N'';

END;

SET @BackupScript = @BackupScript + N'{

"cell_type": "markdown",

"source": [

"## ' + @DatabaseName + N'"

],

"metadata": {

"azdata_cell_guid": "' + LOWER(NEWID()) + N'",

"tags": []

}

},

{

"cell_type": "code",

"source": [' + @BackupColumn + N'],

"metadata": {

"azdata_cell_guid": "' + LOWER(NEWID()) + N'",

"tags": []

},

"outputs": [],

"execution_count": 1

},';

SET @RestoreScript = @RestoreScript + N'{

"cell_type": "markdown",

"source": [

"## ' + @DatabaseName + N'"

],

"metadata": {

"azdata_cell_guid": "' + LOWER(NEWID()) + N'",

"tags": []

}

},

{

"cell_type": "code",

"source": [' + @RestoreColumn + N'],

"metadata": {

"azdata_cell_guid": "' + LOWER(NEWID()) + N'",

"tags": []

},

"outputs": [],

"execution_count": 1

}' + @ArrayEnd;

FETCH NEXT FROM MasterScript

INTO @DatabaseName

, @BackupColumn

, @RestoreColumn;

END;

CLOSE MasterScript;

DEALLOCATE MasterScript;

INSERT INTO #MasterScript

(

BackupScript

, RestoreScript

)

VALUES

(

@BackupScript -- BackupScript - varchar(max)

, @RestoreScript -- RestoreScript - varchar(max)

);

SELECT JSON_QUERY('{

"metadata": {

"kernelspec": {

"name": "SQL",

"display_name": "SQL",

"language": "sql"

},

"language_info": {

"name": "sql",

"version": ""

}

},

"nbformat_minor": 5,

"nbformat": 4,

"cells": [

' + @CredentialVerification + BackupScript + RestoreScript + '

]

}')

FROM #MasterScript;

Now, once the script was finished and all parts were been returned as a properly formatted JSON, is where PowerShell comes handy.

PowerShell

This PowerShell script is very simple. It uses a JSON file to configure options. Using JSON configuration files allows you to change the behavior of the PowerShell job without having to change anything in your PS file. In this case I connected this file to my SQL 2019 Docker container using port 1451 on my local host. Here is an example of the Settings.JSON File.

{

"ConnectionStrings": {

"SQLServer": "localhost,1451",

"UserID": "sa",

"Password": "MyPassword"

},

"OutputSettings": {

"OutputFilePath": "C:\\PowerShell\\BackupRestoreToUrlNotebook\\BackupRestoreNotebook.IPYNB",

"QueryFile": "C:\\PowerShell\\BackupRestoreToUrlNotebook\\BackupRestoreNotebookGenerator.sql"

},

"QuerySettings": {

"StorageAccount": "mystorageaccount",

"Container": "mycontainer",

"Replace": 1,

"MoveLocation": "D:\\",

"BackupFilesCount": 40

}

}The PowerShell script reads the script file (specified on the JSON file) and then runs it against SQL server and exports the results as a IPYNB file to be opened by Azure Data Studio.

clear-host

Write-Host "==========================================================================" -ForegroundColor Green

Write-Host "Backup and Restore Notebook Generator" -ForegroundColor Green

Write-Host "==========================================================================" -ForegroundColor Green

Write-Host ""

Write-Host "Getting Configurations"

$SettingsFile = Get-Content -Path Settings.json | ConvertFrom-Json

If (!$SettingsFile) {

Write-Host "Cannot find configuration file" -ForegroundColor Red

Exit

}

$DataSource = $SettingsFile.ConnectionStrings.SQLServer

$UserID = $SettingsFile.ConnectionStrings.UserID

$Password = $SettingsFile.ConnectionStrings.Password

$QueryFile = $SettingsFile.OutputSettings.QueryFile

$OutputFile = $SettingsFile.OutputSettings.OutputFilePath

$StorageAccount = $SettingsFile.QuerySettings.StorageAccount

$Container = $SettingsFile.QuerySettings.Container

$Replace = $SettingsFile.QuerySettings.Replace

$MoveLocation = $SettingsFile.QuerySettings.MoveLocation

$BackupFilesCount = $SettingsFile.QuerySettings.BackupFilesCount

$ConnectionString = "Data Source=$DataSource; " +

"User Id= $UserID;" +

"Password= $Password;"

Write-Host "==========================================================================" -ForegroundColor Yellow

Write-Host "Connecting to SQL Server: $DataSource " -NoNewline -ForegroundColor Yellow

$Connection = New-Object system.data.SqlClient.SQLConnection($ConnectionString)

$Connection.Open()

if ($Connection.State -eq 'Open') {

Write-Host "| Connected! |" -ForegroundColor Yellow

Write-Host "==========================================================================" -ForegroundColor Yellow

}

else {

Write-Host "Unable to login to target database"

exit

}

$QueryText = Get-Content $QueryFile -Raw

#Replace Query Settings

$CommandText = $QueryText -Replace("<<StorageAccount>>",$StorageAccount.ToString()) `

-Replace("<<Container>>",$Container.ToString()) `

-Replace("'<<REPLACE>>'",$Replace.ToString()) `

-Replace("<<MoveLocation>>",$MoveLocation.ToString()) `

-Replace("'<<BackupCount>>'",$BackupFilesCount.ToString())

$Command = New-Object System.Data.SqlClient.SqlCommand

$Command.Connection = $Connection

$Command.CommandText = $CommandText

$Adapter = New-Object System.Data.sqlclient.sqlDataAdapter

$Adapter.SelectCommand = $Command

$DataSet = New-Object System.Data.DataSet

$Adapter.Fill($DataSet)

$Notebook = $DataSet.Tables[0].Rows[0] | Select-Object -ExpandProperty Column1

$Notebook | Out-File -FilePath $OutputFile

$Connection.Close()

Write-Host "==========================================================================" -ForegroundColor Green

Write-Host "Notebook Generation Complete" -ForegroundColor Green

Write-Host "==========================================================================" -ForegroundColor Green

Results

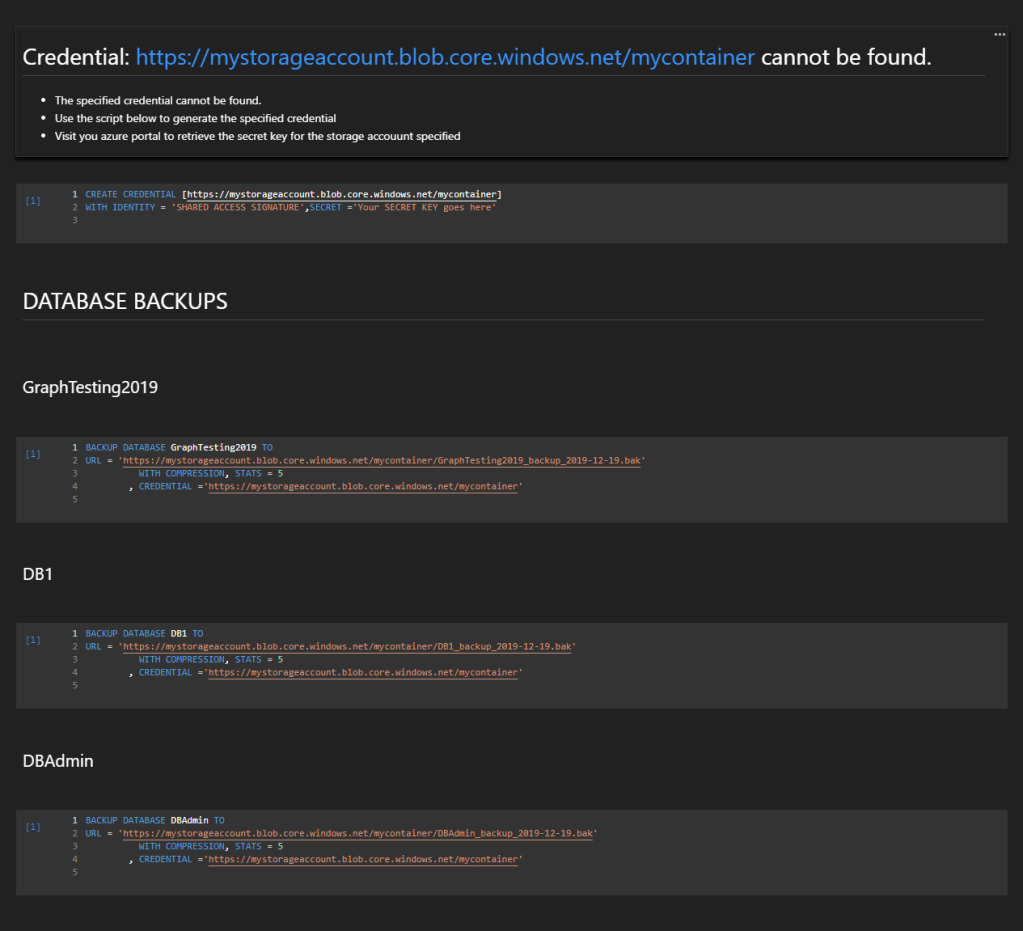

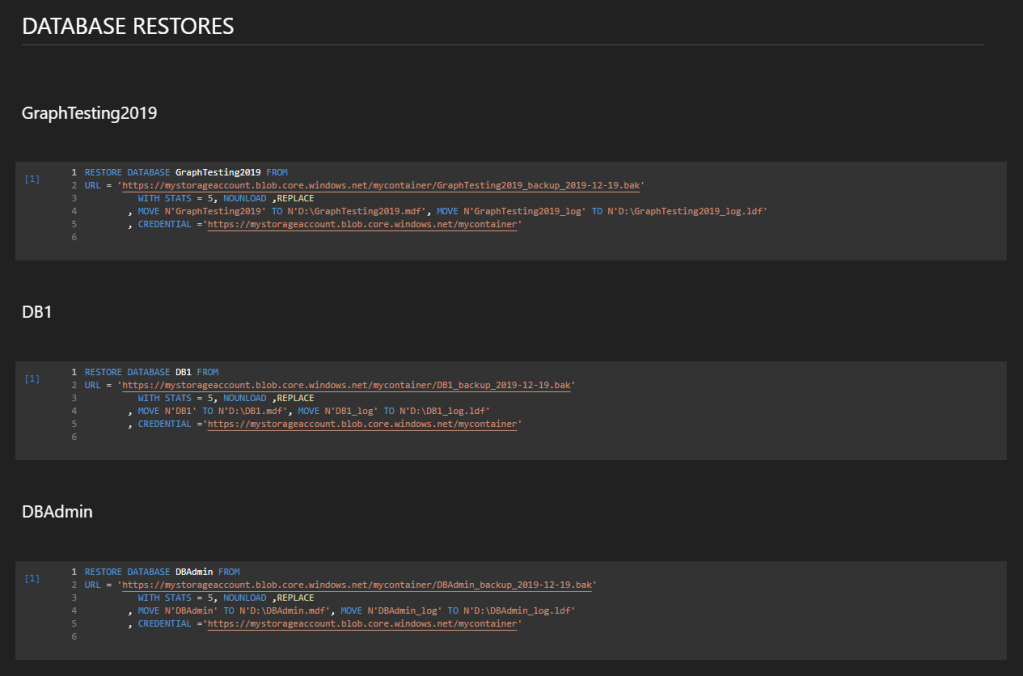

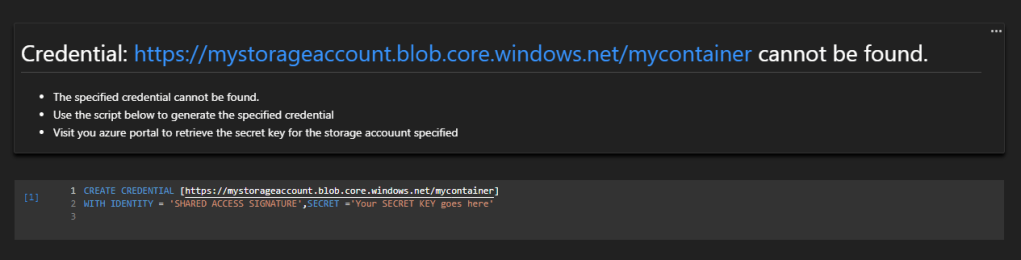

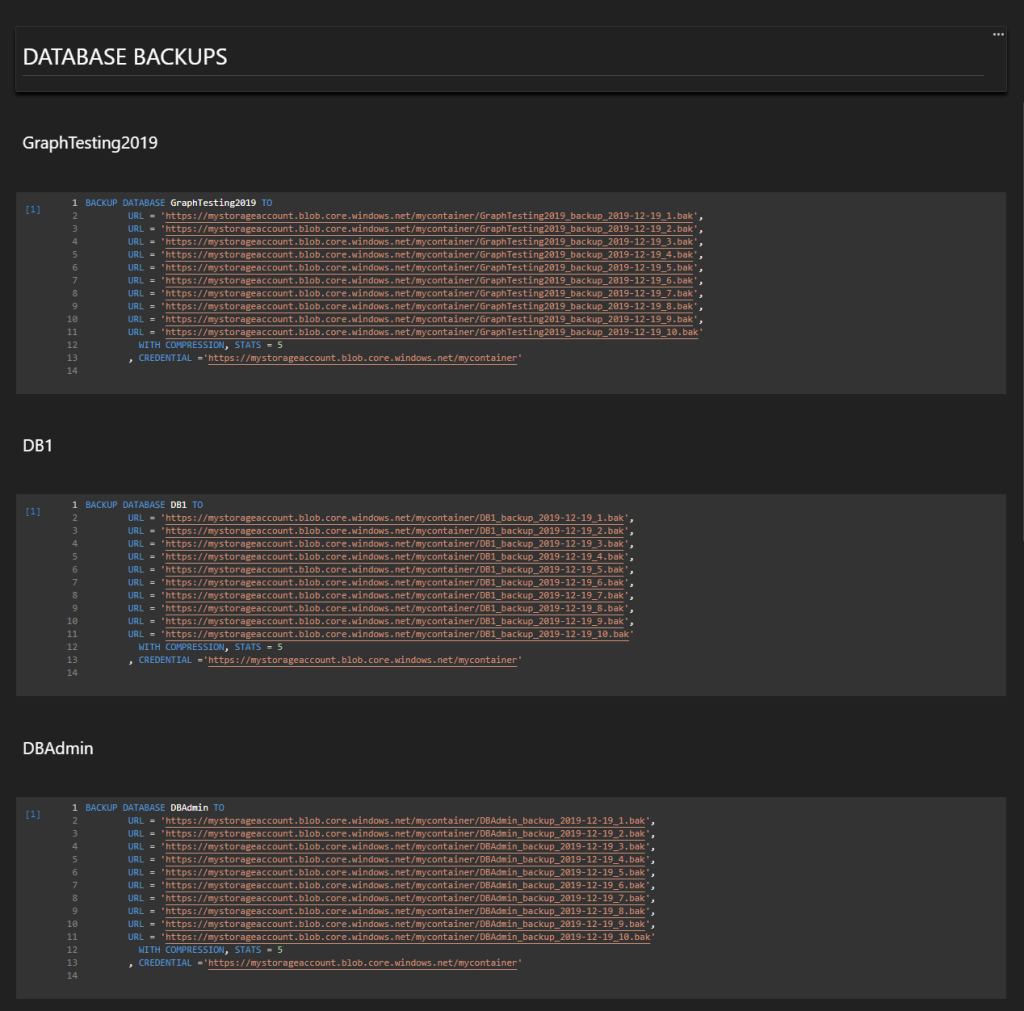

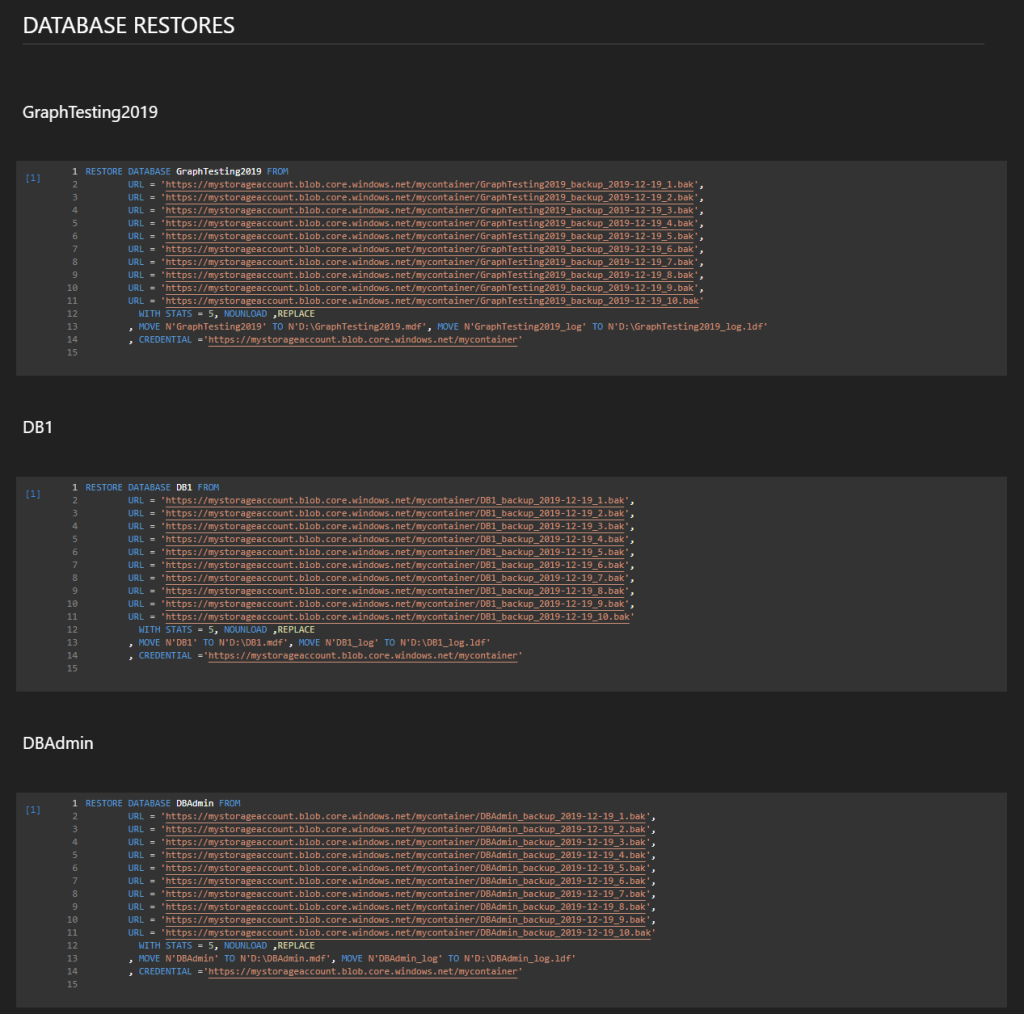

These 3 files (PS, SQL, JSON), generates a notebook with a Credential Check and Instructions on Credential creation if specified credentials are missing. 2 Main Headers 1 for Backups, 1 for Restores followed by Sub-Headers and Code Cells with the Backup and Restore scripts based on the settings defined on the JSON settings file. In this instance there are 3 user databases for testing purposes. Let’s take a look!

Let’s ask for 1 file per backup.

Now for 10 Files per backup

Wrap-Up

This was a very fun exercise and with a very convenient result. As writing the SQL query in the right format has some perks to overcome, being able to generate a fully functional Azure Data Studio Notebook programmatically was very efficient. All the development of this exercise was done in Azure Data Studio, including the PowerShell script. How convenient to have all 3 languages used in this demo in the same software.